Updated 28 March 2026

Today, being online is not enough; you need to be visible in the right place. Following a proper technical SEO checklist helps your website deliver seamless performance, clear structure, and strong technical signals that build trust with both users and search engines. In contrast, even small inefficiencies can quietly push a website behind competitors without obvious signs. This shift has transformed technical SEO from a support function into a growth driver. Brands that invest in it are the ones leading search results and AI recommendations.

With Google’s rollout of AI Overviews (formerly the Search Generative Experience, SGE) and the growing influence of AI-driven discovery, search engines now prioritise websites that are technically sound, fast, secure, and easily understood by both users and machines.

But here’s the real question: if your website feels perfect to you, is it truly ready for how search works today?

You don’t need to worry. In this blog, we explore how technical SEO influences modern search engine rankings.

Technical SEO refers to optimising the technical structure of a website so search engines and AI systems can crawl, render, index, and understand its content effectively. It does not focus on content writing or backlinks, but on how a website performs and communicates with search engines.

A strong technical SEO foundation does more than just support your website: it positions your content to be seen, trusted, and chosen in a crowded digital space. When your site is fast, secure, and easy to understand, both users and search engines respond positively. That’s why businesses don’t leave it to chance. They rely on expert technical SEO services, follow a structured technical SEO checklist, and regularly review performance through an SEO audit checklist to stay ahead.

A strong technical SEO foundation ensures your website is fast, accessible, and easy for search engines to crawl and index. Here are 15 key factors you should optimize to improve performance and rankings in 2026.

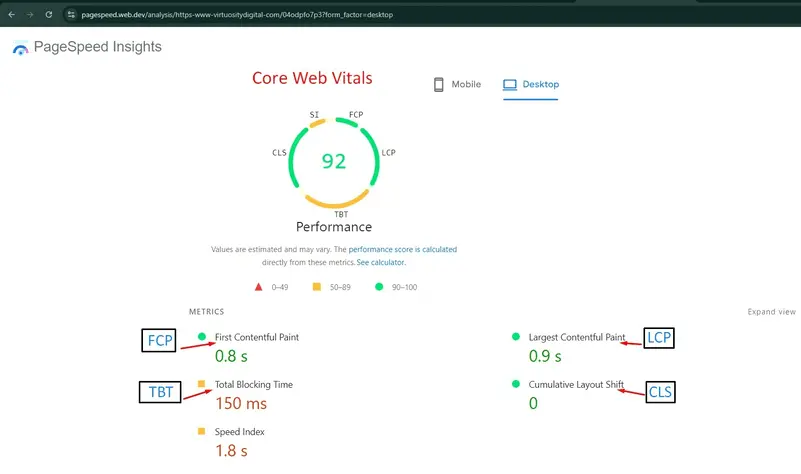

In 2026, SEO page speed is no longer measured in seconds but in milliseconds of perceived responsiveness. Google’s Core Web Vitals (CWV) are now the primary indicators of real-user experience and a major technical SEO ranking factor.

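Here, Google focuses on three key areas: loading speed (Largest Contentful Paint, LCP), responsiveness to user input (Interaction to Next Paint, INP), and visual stability (Cumulative Layout Shift, CLS).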
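To make the Core Web Vitals targets concrete, here is a minimal Python sketch that rates a measured value against the thresholds Google publishes for LCP, INP, and CLS. The function name `rate` and the sample values are illustrative assumptions; real measurements would come from field data such as PageSpeed Insights.

```python
# (good, poor) boundaries from Google's published Core Web Vitals guidance.
# LCP and INP are in milliseconds; CLS is a unitless score.
THRESHOLDS = {
    "LCP": (2500, 4000),
    "INP": (200, 500),
    "CLS": (0.1, 0.25),
}


def rate(metric, value):
    """Classify a measurement as good, needs improvement, or poor."""
    good, poor = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= poor:
        return "needs improvement"
    return "poor"


# Hypothetical measurements for one page.
sample = {"LCP": 3000, "INP": 180, "CLS": 0.3}
report = {metric: rate(metric, value) for metric, value in sample.items()}
```

Google assesses these metrics at the 75th percentile of real visits, so a single lab run is only a rough indicator.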

Google now uses the mobile version of your website for indexing and ranking, not the desktop version (mobile-first indexing). This means the mobile version of your site must be fully optimized, fast, and contain the same content, links, and structured data as the desktop version. If your mobile version is incomplete or poorly designed, your rankings can drop, even if your desktop site is perfect.

HTTPS is a critical part of website security and a confirmed, if lightweight, Google ranking signal. It ensures that all data transferred between users and the website is encrypted, protecting sensitive information like login details, personal data, and payment transactions.

Google now expects every page, not just login or checkout sections, to be fully secured with HTTPS and a valid SSL/TLS certificate. A common issue occurs when secure pages load some elements (like images, scripts, or videos) over HTTP. This is known as mixed content. Regular checks using an SEO audit checklist help identify such issues. Fixing them by updating all resources to HTTPS ensures a safer experience, improves credibility, and supports better rankings in search results.
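A mixed content check can be sketched in a few lines of Python. The example below scans an HTML string for resources requested over plain HTTP; the tag list and the function name `find_mixed_content` are assumptions for illustration, and a real audit tool would also cover CSS `url()` references and resources loaded by scripts.

```python
from html.parser import HTMLParser

# Tags whose src attribute triggers a resource load (not exhaustive).
SRC_TAGS = {"img", "script", "iframe", "video", "audio", "source"}


class MixedContentFinder(HTMLParser):
    """Collects (tag, url) pairs for resources loaded over http://."""

    def __init__(self):
        super().__init__()
        self.insecure = []

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        url = None
        if tag in SRC_TAGS:
            url = attr.get("src")
        elif tag == "link" and "stylesheet" in (attr.get("rel") or "").lower():
            url = attr.get("href")
        if url and url.lower().startswith("http://"):
            self.insecure.append((tag, url))


def find_mixed_content(html):
    finder = MixedContentFinder()
    finder.feed(html)
    finder.close()
    return finder.insecure


page = (
    '<img src="http://example.com/hero.png">'
    '<script src="https://example.com/app.js"></script>'
    '<a href="http://example.com/">Home</a>'
    '<link rel="stylesheet" href="http://example.com/site.css">'
)
issues = find_mixed_content(page)
```

Ordinary `<a>` links are deliberately ignored here, since navigating to an HTTP URL is not a resource load on the secure page.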

An XML sitemap acts as a roadmap for search engines, helping them discover, crawl, and index your website pages more efficiently. It should always include only important, canonical URLs that return a 200 OK status. If your sitemap contains broken, redirected, or duplicate pages, it can confuse search engines and reduce crawl efficiency.
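As a rough illustration of that filtering rule, the Python sketch below builds a sitemap from crawl results and keeps only URLs that return 200 and are their own canonical. The input structure (dictionaries with `url`, `status`, and `canonical` keys) and the `example.com` URLs are assumptions; a real crawler export will look different.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return sitemap XML containing only indexable, canonical URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        is_ok = page["status"] == 200
        is_canonical = page["canonical"] == page["url"]
        if is_ok and is_canonical:
            url = ET.SubElement(urlset, "url")
            ET.SubElement(url, "loc").text = page["url"]
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical crawl results.
pages = [
    {"url": "https://example.com/", "status": 200,
     "canonical": "https://example.com/"},
    {"url": "https://example.com/old", "status": 301,
     "canonical": "https://example.com/new"},
    {"url": "https://example.com/shoes?sort=price", "status": 200,
     "canonical": "https://example.com/shoes"},
]
sitemap_xml = build_sitemap(pages)
```

Only the homepage survives in this sample: the redirected URL and the parameterised duplicate are left out.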

The robots.txt file controls how search engine bots crawl your website and which pages they should avoid. It is mainly used to block low-value areas like admin panels (/wp-admin/), internal search pages, or checkout sections so that search engines focus on important content. A properly configured robots.txt improves crawl efficiency and helps important pages get crawled and indexed faster. Note that robots.txt controls crawling rather than indexing, so pages that must stay out of search results need a noindex directive instead.
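Before deploying a robots.txt change, it helps to test the rules against a few URLs. The sketch below uses the `urllib.robotparser` module from Python’s standard library; the rules and URLs are illustrative assumptions, not a recommended configuration for any particular site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules blocking the low-value areas mentioned above.
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /search
Disallow: /checkout/
"""


def is_crawlable(robots_txt, url, user_agent="Googlebot"):
    """Return True if the given rules allow user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


blog_ok = is_crawlable(ROBOTS_TXT, "https://example.com/blog/technical-seo")
admin_ok = is_crawlable(ROBOTS_TXT, "https://example.com/wp-admin/options.php")
```

The standard library parser uses simple prefix matching, so results can differ from Google’s own parser for rules that rely on wildcards.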

A clean and well-structured website makes it easier for both users and search engines to navigate your content. Your site should follow a simple, logical hierarchy where important pages are not buried too deep. Ideally, every key page should be accessible within three clicks from the homepage so it gets proper attention and crawl priority.
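Click depth can be measured with a breadth-first search over the internal link graph. The following Python sketch assumes the graph is already available as a dictionary mapping each page to the pages it links to (for example, from a crawler export); the paths are hypothetical.

```python
from collections import deque


def click_depths(links, home):
    """Return the minimum number of clicks from home to each reachable page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link graph.
links = {
    "/": ["/services", "/blog"],
    "/services": ["/services/seo"],
    "/services/seo": ["/services/seo/audit"],
    "/services/seo/audit": ["/services/seo/audit/checklist"],
}
depths = click_depths(links, "/")
too_deep = [page for page, depth in depths.items() if depth > 3]
```

Pages listed in `too_deep` are candidates for a link from a hub page or the main navigation. Pages missing from `depths` entirely are orphans with no internal path from the homepage.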

A clean and well-structured URL helps both users and search engines clearly understand what a page is about. URLs should be short, descriptive, and free from unnecessary numbers, symbols, or long query strings. Simple and meaningful URLs improve readability, user trust, and overall search performance.
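One common way to get such URLs is to derive the path segment (the slug) from the page title. This is a minimal Python sketch, assuming ASCII-only slugs are acceptable for the site; `slugify` is an illustrative helper, not a function from any particular CMS.

```python
import re
import unicodedata


def slugify(title):
    """Turn a page title into a short, lowercase, hyphen-separated slug."""
    # Strip accents by decomposing characters and dropping non-ASCII marks.
    text = unicodedata.normalize("NFKD", title)
    text = text.encode("ascii", "ignore").decode("ascii")
    # Collapse every run of non-alphanumeric characters into one hyphen.
    text = re.sub(r"[^a-zA-Z0-9]+", "-", text).strip("-")
    return text.lower()


slug = slugify("Technical SEO Checklist: 15 Key Factors!")
```

Here `slug` becomes `technical-seo-checklist-15-key-factors`, which can be appended to a short, stable path such as `/blog/`.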

Structured data helps search engines clearly understand your content by providing extra context through Schema.org markup in JSON-LD format. In 2026, it works like a communication layer between your website and search engines, helping your content appear in enhanced results such as star ratings, FAQs, product details, and more.
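As an illustration, the Python sketch below generates a JSON-LD `Article` snippet ready to place in a page’s `<head>`. The property set is a small assumed subset of what Schema.org defines, and the author name is a placeholder.

```python
import json


def article_jsonld(headline, author, date_modified):
    """Return a <script> tag containing Schema.org Article markup."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "dateModified": date_modified,
    }
    # Escape "</" so text values cannot close the script element early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">' + payload + "</script>"


# Placeholder values for illustration only.
tag = article_jsonld("Technical SEO Checklist", "Jane Doe", "2026-03-28")
```

The generated markup should still be validated with a tool such as the Rich Results Test before it goes live.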

Duplicate content occurs when the same or very similar content is available on multiple URLs, which can confuse search engines and reduce your ranking potential. When this happens, search engines may not know which version to index or rank, causing your visibility to drop.
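A quick way to spot likely duplicates is to normalise each crawled URL and group the ones that collapse to the same address. The Python sketch below is one possible set of rules (force HTTPS, lowercase the host, drop tracking parameters and trailing slashes); these rules are assumptions and should be adapted to how the site actually serves its pages. Each group it finds typically needs a `rel="canonical"` tag or a 301 redirect pointing at the preferred version.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Parameters that usually do not change page content (assumed list).
TRACKING_KEYS = {"gclid", "fbclid"}


def normalise(url):
    """Reduce a URL to the form we treat as its canonical address."""
    parts = urlsplit(url)
    query = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.lower().startswith("utm_")
        and key.lower() not in TRACKING_KEYS
    )
    path = parts.path.rstrip("/") or "/"
    return urlunsplit(
        ("https", parts.netloc.lower(), path, urlencode(query), "")
    )


def duplicate_groups(urls):
    """Map each canonical URL to its variants when more than one exists."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalise(url)].append(url)
    return {key: found for key, found in groups.items() if len(found) > 1}


crawled = [
    "https://example.com/shoes",
    "http://Example.com/shoes/?utm_source=newsletter",
    "https://example.com/shoes?color=red",
]
groups = duplicate_groups(crawled)
```

In this sample the first two URLs are grouped together, while the `color=red` variant is kept separate because the parameter may change the content.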

Search engines have a limited crawl budget, meaning they can only crawl a certain number of pages on your website within a given time. Managing this properly ensures that important pages are discovered and indexed faster, instead of wasting resources on low-value or unnecessary URLs.

Page speed is a direct ranking factor and strongly affects user experience. Slow-loading websites increase bounce rates and reduce conversions. Optimizing resources like CSS, JavaScript, and HTML helps improve loading performance and server response time.

Images are one of the biggest causes of slow websites if not optimized correctly. Large image files increase load time and negatively impact user experience and rankings. Optimizing images ensures faster performance without compromising quality.

Broken links create a poor user experience and can harm your website’s credibility and SEO performance. When users land on a 404 error page, it increases frustration and bounce rates. Managing redirects properly helps maintain link value and smooth navigation.
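Redirect chains (A to B to C) are a common side effect of repeated migrations. The Python sketch below flattens a redirect map so that every old URL points straight at its final destination; the map format and paths are assumptions, since real rules usually live in server configuration or a CMS plugin.

```python
def resolve(redirects, url, max_hops=10):
    """Follow a redirect map from url; return (final_url, hop_count)."""
    seen = {url}
    hops = 0
    while url in redirects:
        url = redirects[url]
        hops += 1
        if url in seen or hops > max_hops:
            raise ValueError(f"redirect loop or overly long chain at {url}")
        seen.add(url)
    return url, hops


def flatten(redirects):
    """Rewrite every rule to point directly at its final destination."""
    return {source: resolve(redirects, source)[0] for source in redirects}


# Hypothetical rules left over from two site migrations.
redirects = {"/old": "/interim", "/interim": "/new"}
flat = flatten(redirects)
```

After flattening, both `/old` and `/interim` redirect to `/new` in a single hop, which saves a round trip for users and crawlers alike.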

Internal linking helps search engines understand your website structure and improves the authority of important pages. A strong linking strategy ensures better crawling, indexing, and user navigation across your site.

If your website targets users in different countries or languages, hreflang tags help search engines show the correct version of your page to the right audience. This improves user experience and prevents duplicate content issues across regions.
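Hreflang annotations are easy to get wrong by hand, so they are often generated. This Python sketch produces the `<link>` tags for one page from a mapping of language codes to URLs, plus an `x-default` fallback; the function name, language codes, and URLs are illustrative assumptions.

```python
from html import escape


def hreflang_tags(alternates, default_lang):
    """Return <link> tags for every language version plus x-default."""
    tags = [
        f'<link rel="alternate" hreflang="{escape(lang)}" '
        f'href="{escape(url)}" />'
        for lang, url in sorted(alternates.items())
    ]
    fallback = escape(alternates[default_lang])
    tags.append(
        f'<link rel="alternate" hreflang="x-default" href="{fallback}" />'
    )
    return tags


# Hypothetical language versions of one page.
alternates = {
    "en-us": "https://example.com/us/",
    "en-gb": "https://example.com/uk/",
    "de": "https://example.com/de/",
}
tags = hreflang_tags(alternates, default_lang="en-us")
```

The same full set of tags, including a self-reference, needs to appear on every language version; annotations that are not reciprocal are ignored by search engines.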

A detailed technical SEO checklist ensures nothing critical is missed during audits or site launches. From performance metrics to crawl rules, it helps teams maintain consistency and scalability as websites grow.

| Area | Core Metric / Standard | Why It Matters | Primary Tool |
|---|---|---|---|
| Responsiveness | INP ≤ 200 ms | The website should respond quickly when users click, tap, or type. If the site feels slow, users leave and rankings drop. | Google PageSpeed Insights |
| Mobile UX | Content parity and 16px+ text | The mobile site must show the same content as desktop, with readable text and easy-to-click buttons. | GSC Mobile Usability Report |
| Trust | E-E-A-T via Schema markup (JSON-LD) | Helps Google understand who owns the site and who wrote the content, which builds trust. | Rich Results Test |
| Crawlability | robots.txt and llms.txt | Ensures Google and AI bots can read and understand your website properly. | GSC URL Inspection Tool |
| Architecture | Crawl depth ≤ 3 clicks | Important pages should be easy to reach; pages buried deep get less attention from Google. | Screaming Frog / Ahrefs |
| Security | 100% HTTPS (no mixed content) | A secure website builds user trust; insecure sites lose rankings. | SSL Checker |

Want to improve your search visibility? Start implementing this technical SEO checklist today, or engage a professional SEO service to identify hidden technical issues and strengthen your website’s performance before they affect rankings.

To sum up, technical SEO failures directly affect whether a website is indexed, ranked, or referenced by AI systems. Fixing technical foundations improves speed, trust, crawlability, and long-term visibility. By following a well-structured technical SEO checklist and conducting regular audits, websites remain competitive, discoverable, and aligned with modern search and AI-driven ranking systems.